Building a Player Tracking Pipeline for NFL All-22 Film

The NFL doesn’t make its coaching film public. You need a paid NFL Pro subscription, and even then the video lives behind an authenticated HLS stream. Getting a raw MP4 out of it is already a project on its own.

Once I solved that part, I wanted to actually do something useful with the footage. Specifically, I wanted to track individual players through a full play. Where is Caleb Williams at every frame? How does he move pre-snap? When does he break the pocket? That kind of thing. Route data, basically, but extracted from film instead of being manually charted.

Why SAM3

SAM3 is Meta’s Segment Anything Model 3. What makes it useful here is the text-prompt interface. You pass it a frame and a string like “football player” and it detects and segments every matching object in the image. No training data, no fine-tuning, no labeled NFL dataset required.

The alternative was training YOLO on football footage and hoping it generalizes. SAM3 skips all of that.

The Two-Phase Pipeline

The full thing runs in two phases.

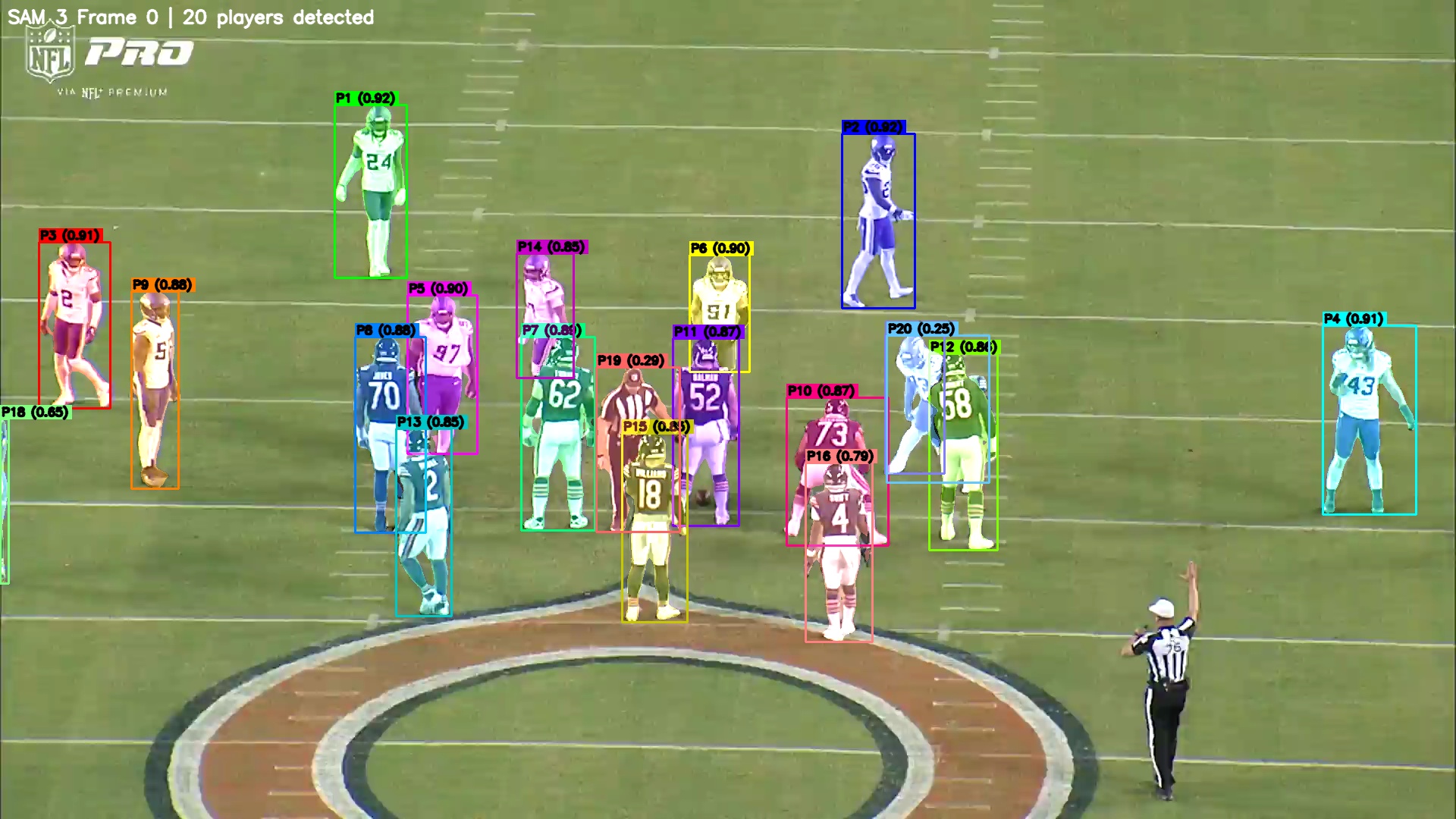

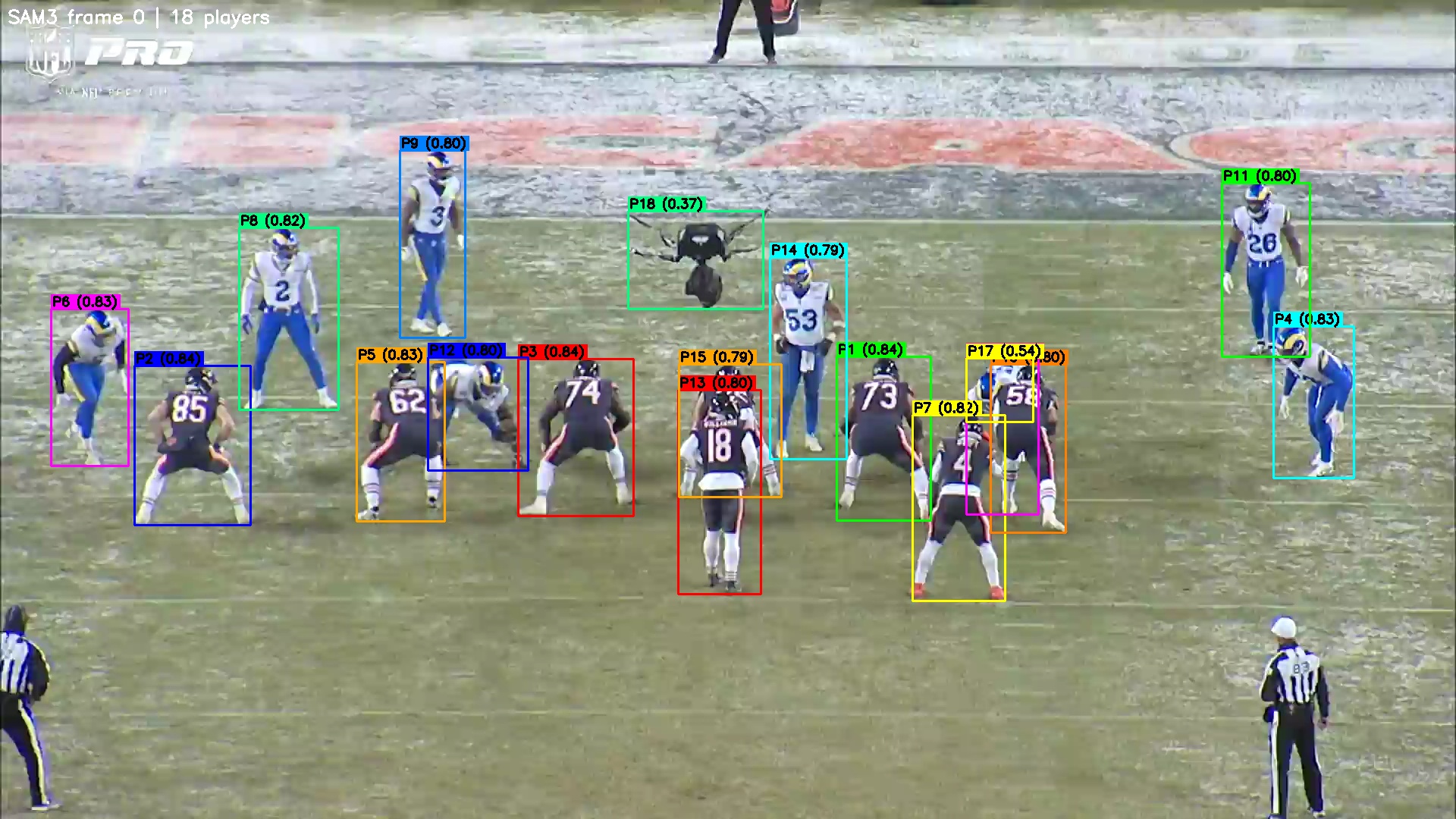

Phase one: SAM3 runs on frame 0 of the clip. It detects every player and saves a verification image with each one labeled P1, P2, P3, and so on alongside their confidence scores. On the Bears vs Vikings Week 1 play it detected 20 players. On the Bears vs Rams endzone clip it detected 18, one of which was an aerial camera rig it flagged as P18 at 0.37 confidence. (Honestly, fair enough.)
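A minimal sketch of the labeling step, not the script itself. It assumes the detector hands back boxes and scores in a stable order; the `Detection` class, the `label_detections` helper, the `min_score` cutoff, and the box coordinates are all made up for illustration. Only the 0.37 score echoes the camera rig above.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    box: tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels
    score: float                            # detector confidence, 0..1


def label_detections(detections, min_score=0.0):
    """Assign P1, P2, ... in detection order and flag low-confidence hits.

    Weak detections are flagged rather than dropped, so the index a user
    reads off the verification image still matches the detection list.
    """
    labeled = []
    for i, det in enumerate(detections, start=1):
        labeled.append({
            "label": f"P{i}",
            "index": i - 1,  # 0-based index to pass back to the script
            "box": det.box,
            "score": det.score,
            "low_confidence": det.score < min_score,
        })
    return labeled


# Illustrative values only, not real detections from the clips.
detections = [
    Detection((100, 200, 140, 280), 0.91),
    Detection((300, 210, 338, 290), 0.88),
    Detection((620, 40, 700, 90), 0.37),
]

for row in label_detections(detections, min_score=0.5):
    flag = "  <- check this one" if row["low_confidence"] else ""
    print(f'{row["label"]}: {row["score"]:.2f}{flag}')
```

Flagging instead of filtering is a deliberate choice in this sketch: removing a detection would renumber everything after it and break the link between the image labels and the index you pass back.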

Phase two: you look at the verification image, find your target by their position on the field, pass that index back to the script, and SAM2.1 takes over. SAM2.1’s video predictor takes that player’s bounding box as its prompt and propagates the resulting mask across every frame using memory-based attention. It’s not re-detecting independently each frame. It tracks the mask through time, which handles camera pans and partial occlusion pretty well.
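Roughly, the hand-off looks like the sketch below. It assumes the official `sam2` package, where the video predictor exposes `add_new_points_or_box` and `propagate_in_video`; the `track_from_box` wrapper is a hypothetical name, and the predictor and inference state are passed in rather than built here, so nothing in this block loads a model.

```python
def track_from_box(predictor, state, box, obj_id=1, frame_idx=0):
    """Seed one object with a box prompt, then collect one mask per frame.

    Assumptions (not taken from the actual script):
      - `predictor` behaves like the object returned by
        sam2.build_sam.build_sam2_video_predictor(...)
      - `state` behaves like the result of predictor.init_state(
            video_path=..., offload_video_to_cpu=True,
            offload_state_to_cpu=True)
    """
    predictor.add_new_points_or_box(
        inference_state=state,
        frame_idx=frame_idx,  # the frame the box was detected on
        obj_id=obj_id,        # arbitrary integer id for the tracked player
        box=box,              # (x1, y1, x2, y2) of the chosen detection
    )

    masks = {}
    for out_frame_idx, out_obj_ids, out_mask_logits in predictor.propagate_in_video(state):
        # Only one object was seeded, so its logits sit at position 0.
        # Logits above zero are treated as foreground.
        masks[out_frame_idx] = out_mask_logits[0] > 0.0
    return masks
```

The box is only supplied once, on the seed frame. Everything after that comes from the predictor’s memory of earlier frames, which is why a wrong index in phase one means a wrong player for the whole clip.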

The first clean run was Caleb Williams against the Vikings, Week 1: 2,015 frames at 59.9fps (about 33.6 seconds of footage), and roughly 30 minutes of propagation on an M-series chip.
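To put those numbers in perspective, here is the arithmetic written out. The inputs come straight from the run above, and since the 30-minute figure is itself approximate, the results are ballpark only.

```python
frames = 2015
fps = 59.9
propagation_minutes = 30  # "roughly 30 minutes", so treat results as estimates

clip_seconds = frames / fps                           # length of the footage
seconds_per_frame = propagation_minutes * 60 / frames  # tracking cost per frame
slowdown = propagation_minutes * 60 / clip_seconds     # versus real-time playback

print(f"clip length: {clip_seconds:.1f} s")
print(f"propagation cost: {seconds_per_frame:.2f} s per frame")
print(f"slowdown vs real time: {slowdown:.0f}x")
```

That works out to a bit under a second of compute per frame, or on the order of 50 times slower than real time.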

What Broke

SAM3 has its own video predictor, which I tried first. End-to-end on an M-series Mac mini, it hit two problems: a rotary encoding shape mismatch that crashed on MPS, and memory pressure that made full-video propagation unreliable at this clip length. SAM2.1 handles the heavy lifting better on consumer hardware. So the final architecture is SAM3 for text-prompted detection on frame 0 only, then SAM2.1 for everything after. SAM2.1’s hiera_small model runs fine on MPS with video and state offloaded to CPU.

There’s also an AttributeError that bites early: if you initialize the predictor wrong after swapping in the semantic model, you get an error saying SAM3SemanticModel has no attribute warmup. The fix is to call predictor.setup_model(model=sem_model) instead of assigning the model directly.

The other limitation right now is that player selection is manual. SAM3 spits out 15 to 25 player detections per frame and there’s no jersey OCR yet. You look at the verify image, find your target by their spot on the field, and pass that index back. It works, it’s just not automatic.

The Output

The script produces an annotated MP4 with the mask and bounding box overlaid on the original footage, plus a JSON file with per-frame bounding box coordinates and pixel counts.
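As a rough illustration of what one per-frame record could look like, the sketch below reduces a binary mask to a bounding box and a pixel count. The field names (`frame`, `bbox`, `pixels`) and the `mask_to_record` helper are hypothetical, and the mask is a plain list of rows so the example runs without NumPy. The real script’s schema may differ.

```python
import json


def mask_to_record(frame_idx, mask):
    """Summarize a binary mask (rows of 0/1) as a bbox plus pixel count.

    The bbox is [x_min, y_min, x_max, y_max] in inclusive pixel coordinates.
    A frame where the target is not visible gets bbox=None and pixels=0.
    """
    xs, ys = [], []
    for y, row in enumerate(mask):
        for x, value in enumerate(row):
            if value:
                xs.append(x)
                ys.append(y)

    if not xs:
        return {"frame": frame_idx, "bbox": None, "pixels": 0}

    return {
        "frame": frame_idx,
        "bbox": [min(xs), min(ys), max(xs), max(ys)],
        "pixels": len(xs),
    }


# Tiny 5x4 toy mask standing in for a full-resolution frame.
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
print(json.dumps(mask_to_record(0, mask)))
```

The pixel count is worth keeping next to the box: a sudden drop in mask area with a stable box is one cheap signal that the target is being occluded.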

The JSON is what matters for anything downstream: route tracing, snap detection, player path visualization. The tracking holds up through camera pans and stays reasonably clean until the target gets buried in a pile at the line, which is basically every play, so there’s still work to do there.
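For a sense of how the downstream side could start, here is a sketch that turns per-frame boxes into a centroid path. The record layout (`frame` and `bbox` keys, with `bbox` set to `None` when the target is lost) and both helper names are assumptions for illustration, not the script’s actual schema.

```python
import math


def centroid_path(records):
    """Return [(frame, cx, cy), ...] from per-frame bbox records.

    Frames with no box (target lost or fully occluded) are skipped.
    """
    path = []
    for rec in records:
        if rec["bbox"] is None:
            continue
        x1, y1, x2, y2 = rec["bbox"]
        path.append((rec["frame"], (x1 + x2) / 2, (y1 + y2) / 2))
    return path


def path_length(path):
    """Total distance travelled along the path, in pixels."""
    total = 0.0
    for (_, xa, ya), (_, xb, yb) in zip(path, path[1:]):
        total += math.hypot(xb - xa, yb - ya)
    return total


# Illustrative records, not real tracking output.
records = [
    {"frame": 0, "bbox": [0, 0, 10, 20]},
    {"frame": 1, "bbox": None},
    {"frame": 2, "bbox": [3, 4, 13, 24]},
]
path = centroid_path(records)
print(path, path_length(path))
```

These are image-space pixels, so the path still includes whatever the camera was doing. It is a starting point for route tracing, not field coordinates.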

What’s Next

Jersey OCR to auto-identify the target player is the obvious next step. After that, multi-player tracking (all 22 at once) and route tracing from the centroid paths. The eventual goal is clean per-play route data extracted from coaching film without hand-labeling anything.

The code is on GitHub if you want to run it yourself. You’ll need an NFL Pro subscription and an Apple Silicon or CUDA machine for the SAM2.1 propagation step.