Caleb Williams Looks Bad Until You Look Closer

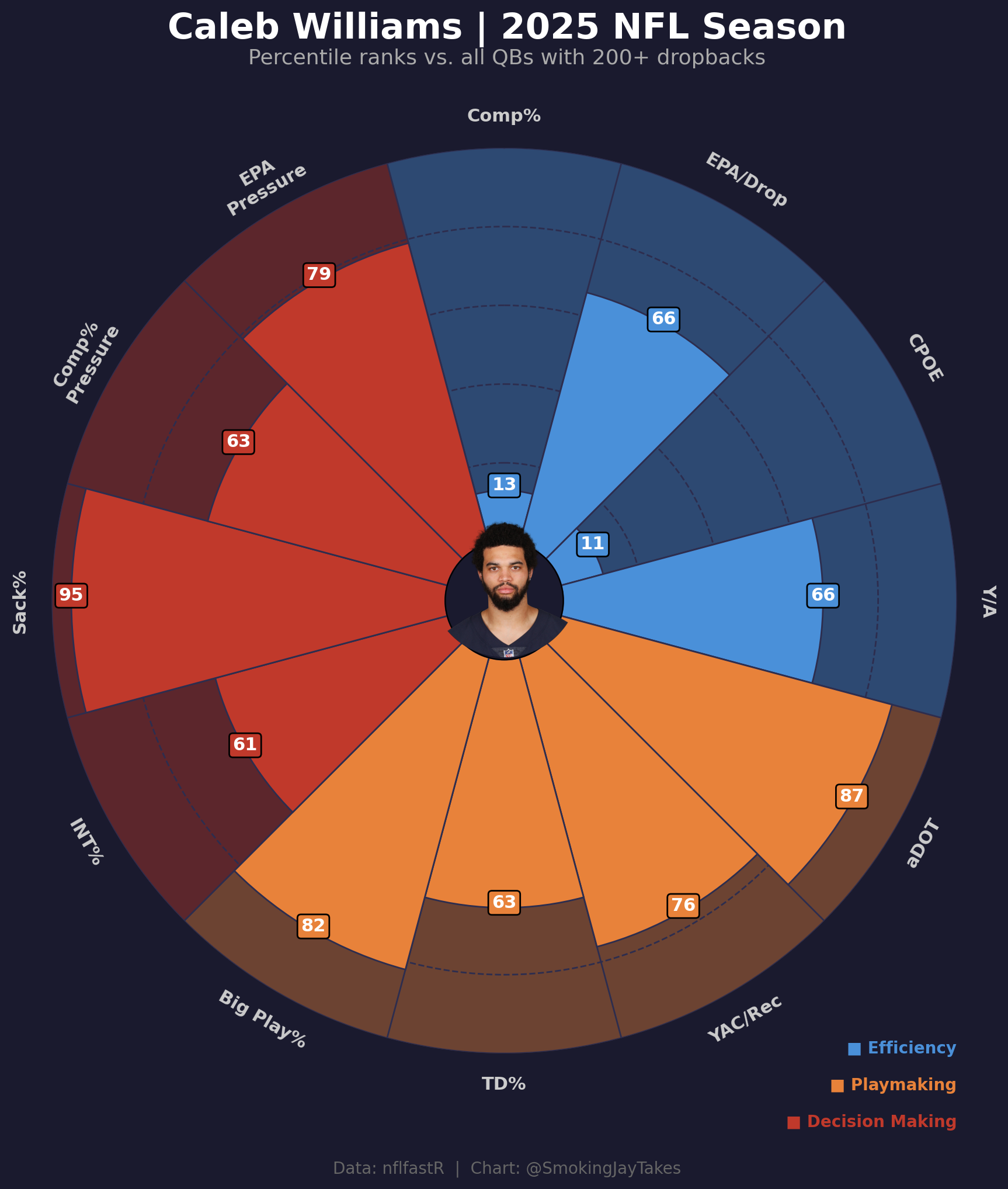

Caleb Williams finished 2025 with a completion percentage in the 13th percentile among qualifying QBs. His CPOE was in the 11th. If you stopped there, you’d write him off.

I didn’t stop there.

I built a pizza chart to look at his full percentile profile across 12 metrics, and the picture that comes out is a lot more interesting than the surface stats suggest.

The chart

The metrics are grouped into three buckets: efficiency (blue), playmaking (orange), and decision making (red). Each slice is a percentile rank against all QBs with 200+ dropbacks in 2025.

Here’s what’s actually going on.

Efficiency numbers are complicated

Comp% at 13th percentile. CPOE at 11th. Those are genuinely bad, and CPOE is the one that stings more. CPOE (completion percentage over expectation) accounts for throw difficulty. It’s asking: given things like the depth of the throw, the down and distance, and the field position, how did this QB complete passes relative to what a model would predict? When both raw comp% and the difficulty-adjusted version are in the bottom 15%, you can’t explain it away with “he’s just taking hard throws.”
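To make the definition concrete, here is a minimal sketch of how CPOE falls out of per-throw completion probabilities. The throws and probabilities are invented for illustration, and the `cpoe` helper is hypothetical; in nflfastR play-by-play the model's estimate lives in the `cp` column and the per-play result in `cpoe`.

```python
# Toy throws: (expected completion probability, completed?). Values are made up.
throws = [
    (0.85, True),   # checkdown, completed
    (0.70, False),  # intermediate throw, missed
    (0.40, True),   # deep shot, completed
    (0.75, False),  # intermediate throw, missed
]

def cpoe(throws):
    """Completion percentage over expectation, in percentage points."""
    actual = sum(1 for _, done in throws if done) / len(throws)
    expected = sum(p for p, _ in throws) / len(throws)
    return 100 * (actual - expected)

result = cpoe(throws)
print(round(result, 1))
```

A negative number means the QB completed fewer passes than the model expected for that mix of throws, so a deep-heavy diet is already priced in.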

But EPA/drop sits at 66th percentile. Above average value generated per dropback. And Y/A is 66th too. That combination is the interesting wrinkle: he’s missing throws more than expected, but the throws he does connect on are generating real value. The outcomes aren’t uniformly bad. They’re unevenly distributed.

The playmaking numbers are legitimately good

aDOT at 87th percentile. That means Caleb is pushing the ball downfield as aggressively as almost anyone in the league: throwing deep rather than dumping it off to pad completion percentage.

Big play rate (completions of 20+ yards per attempt) is 82nd percentile. YAC per reception is 76th. TD% is 63rd.
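As a rough sketch of how these two stats are defined, the snippet below computes aDOT and big play rate from a handful of invented attempts. The tuple layout is hypothetical; in the real play-by-play the equivalent fields are `air_yards`, `complete_pass`, and `yards_gained`.

```python
# Toy attempts: (air_yards, completed, yards_gained). Values are made up.
attempts = [
    (22, True, 31),
    (4, True, 6),
    (18, False, 0),
    (9, True, 24),
    (27, False, 0),
]

# aDOT: average depth of target across all attempts, complete or not.
adot = sum(air for air, _, _ in attempts) / len(attempts)

# Big play rate: completions gaining 20+ yards, divided by attempts.
big_plays = sum(1 for _, done, gained in attempts if done and gained >= 20)
big_play_pct = 100 * big_plays / len(attempts)

print(adot, big_play_pct)
```

Note that the fourth attempt counts as a big play even though it only traveled 9 yards in the air. That is why big play rate and YAC per reception tend to move together.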

This cluster makes sense given the aDOT. He’s taking shots, some of them connect for big plays, and the receivers are creating yards after the catch. You lose on completion percentage, but you gain on chunk plays, which is how EPA/drop stays above average. That’s a specific QB profile, and it’s not an accident.

Sack rate is wild

95th percentile on sack rate. That means almost nobody was better at avoiding sacks in 2025.

For a guy who’s supposedly holding onto the ball, taking too many risks, not managing the game well, a 95th percentile sack rate doesn’t fit the narrative. He’s getting the ball out or scrambling before the pocket collapses, repeatedly, at an elite rate.
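Because a lower sack rate is better, the raw percentile has to be flipped before it goes on the chart. Here is a minimal sketch with invented sack rates. The `percentile_rank` helper is a hand-rolled stand-in that is meant to approximate the default `kind='rank'` behavior of SciPy's `percentileofscore`; treat it as an assumption, not as the library's code.

```python
def percentile_rank(values, score):
    """Percent of values at or below score, averaging over ties."""
    below = sum(1 for v in values if v < score)
    at_or_below = sum(1 for v in values if v <= score)
    bonus = 1 if at_or_below > below else 0
    return (below + at_or_below + bonus) * 50.0 / len(values)

# Toy sack rates (% of dropbacks) for five QBs. Values are made up.
sack_rates = [4.1, 5.8, 6.5, 7.9, 9.3]
qb_rate = 4.1  # the lowest, i.e. best, rate in this group

raw = percentile_rank(sack_rates, qb_rate)  # low, because the rate is low
inverted = 100 - raw                        # flipped so higher is better

print(raw, inverted)
```

With this kind of ranking the best QB in a pool of five lands at 80 after inversion, not 100. The ceiling only approaches 100 as the pool of qualifying QBs grows.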

He holds up under pressure

EPA under pressure at 79th percentile. Comp% under pressure at 63rd. These aren’t bad. They’re actually above average. The guys who supposedly can’t perform when the heat is on don’t usually post 79th percentile EPA numbers on those plays.

The full picture

If you’re evaluating Caleb on comp% and CPOE alone, you’re reading a partial transcript. The full version says: deep shot taker, big play generator, elite sack avoidance, above-average under duress, with an above-average EPA/drop that coexists with genuinely poor completion rates.

Is that good enough? Honestly, I’m not sure. The CPOE at 11th is a real problem and not one you can explain away. But the underlying traits point toward a QB who is generating value through explosives and limiting the negative plays (95th percentile sack rate, not turning it over constantly). Whether he can clean up the accuracy while maintaining the downfield aggression is the whole question going into year three.

The chart doesn’t answer everything. It just makes the question harder to answer lazily.

How I built it

The whole thing runs on nfl_data_py for the play-by-play and mplsoccer’s PyPizza for the visualization. Headshot pulls straight from the nflverse roster parquet.

Data pipeline

First, pull play-by-play and find Caleb’s gsis_id:

    import nfl_data_py as nfl
    import pandas as pd

    pbp = nfl.import_pbp_data([2025])

    rosters = pd.read_parquet(
        "https://github.com/nflverse/nflverse-data/releases/download/rosters/roster_2025.parquet"
    )

    caleb_roster = rosters[
        (rosters['full_name'].str.contains('Caleb Williams', case=False, na=False)) &
        (rosters['team'] == 'CHI')
    ]
    caleb_id = caleb_roster.iloc[0]['gsis_id']
    headshot_url = caleb_roster.iloc[0]['headshot_url']

Then filter to QBs with 200+ dropbacks and compute the metrics:

    from scipy.stats import percentileofscore
    import numpy as np

    dropbacks = pbp[pbp['qb_dropback'] == 1].copy()
    dropback_counts = dropbacks.groupby('passer_player_id').size().reset_index(name='dropbacks')
    qualifying_qbs = dropback_counts[dropback_counts['dropbacks'] >= 200]['passer_player_id'].tolist()

    pass_plays = pbp[(pbp['pass_attempt'] == 1) & (pbp['two_point_attempt'] == 0)].copy()

For each qualifying QB I compute 12 stats: comp%, EPA/dropback, CPOE, Y/A, aDOT, YAC/rec, TD%, big play%, INT%, sack%, comp% under pressure, and EPA under pressure. Two of them (INT% and sack%) are inverted before percentile ranking so higher is always better.
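The per-QB aggregation itself isn't shown in the snippets here, so the following is only a sketch of what it could look like for a few of the 12 stats, run on a tiny invented frame. The column names follow nflfastR play-by-play (`complete_pass`, `air_yards`, `yards_gained`, `interception`), but the `toy` frame and the exact formulas are assumptions rather than the original script.

```python
import pandas as pd

# Tiny stand-in for pass_plays; values are made up for illustration.
toy = pd.DataFrame({
    "passer_player_id": ["A", "A", "A", "A", "B", "B"],
    "complete_pass":    [1, 0, 1, 1, 1, 0],
    "air_yards":        [20, 15, 5, 10, 3, 7],
    "yards_gained":     [28, 0, 9, 10, 4, 0],
    "interception":     [0, 1, 0, 0, 0, 0],
})

# Every row is one attempt, so per-QB means of 0/1 flags are rates.
grouped = toy.groupby("passer_player_id")
qb_df = pd.DataFrame({
    "comp_pct": 100 * grouped["complete_pass"].mean(),
    "yards_att": grouped["yards_gained"].mean(),
    "adot": grouped["air_yards"].mean(),
    "int_pct": 100 * grouped["interception"].mean(),
}).reset_index()

# One QB's row, pulled out the way the percentile loop expects it.
caleb = qb_df[qb_df["passer_player_id"] == "A"].iloc[0]
print(qb_df)
```

On the real data you would first restrict `pass_plays` to the IDs in `qualifying_qbs` and look up the row by `caleb_id`. Sack rate needs the dropback frame rather than the attempt frame, since a sack is a dropback but not a pass attempt.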

    metrics = [
        ('comp_pct', 'Comp%', False),
        ('epa_dropback', 'EPA/Drop', False),
        ('cpoe', 'CPOE', False),
        ('yards_att', 'Y/A', False),
        ('adot', 'aDOT', False),
        ('yac_rec', 'YAC/Rec', False),
        ('td_pct', 'TD%', False),
        ('big_play_pct', 'Big Play%', False),
        ('int_pct', 'INT%', True),    # lower is better → invert
        ('sack_pct', 'Sack%', True),  # lower is better → invert
        ('comp_under_pressure', 'Comp%\nPressure', False),
        ('epa_under_pressure', 'EPA\nPressure', False),
    ]

    values = []
    for col, label, inverted in metrics:
        raw_val = caleb[col]
        col_vals = qb_df[col].dropna().values
        pct = percentileofscore(col_vals, raw_val)
        if inverted:
            pct = 100 - pct
        values.append(round(pct))

Building the pizza

PyPizza handles most of the heavy lifting. I passed three slice colors to separate the metric groups visually:

    from mplsoccer import PyPizza

    # Slice labels in the same order as values.
    # (Assumed: params is not defined in the snippets shown here.)
    params = [label for _, label, _ in metrics]

    slice_colors = [
        "#4A90D9", "#4A90D9", "#4A90D9", "#4A90D9",  # efficiency
        "#E8823A", "#E8823A", "#E8823A", "#E8823A",  # playmaking
        "#C0392B", "#C0392B", "#C0392B", "#C0392B",  # decision making
    ]

    baker = PyPizza(
        params=params,
        background_color="#1A1A2E",
        straight_line_color="#2D2D4E",
        straight_line_lw=1,
        last_circle_color="#2D2D4E",
        last_circle_lw=1,
        other_circle_color="#2D2D4E",
        other_circle_lw=1,
        inner_circle_size=15,
    )

    fig, ax = baker.make_pizza(
        values,
        figsize=(10, 10.5),
        color_blank_space="same",
        slice_colors=slice_colors,
        value_colors=["#FFFFFF"] * 12,
        value_bck_colors=slice_colors,
        blank_alpha=0.4,
        kwargs_slices=dict(edgecolor="#2D2D4E", zorder=2, linewidth=1),
        kwargs_params=dict(color="#CCCCCC", fontsize=11, fontweight="bold", va="center"),
        kwargs_values=dict(
            color="#FFFFFF", fontsize=11, fontweight="bold",
            bbox=dict(edgecolor="#000000", boxstyle="round,pad=0.2", lw=1)
        ),
    )

The headshot goes in the center using ax.inset_axes. Getting it to sit exactly at the pizza’s center took some fiddling (the axes center and the pizza center don’t perfectly overlap):

    from PIL import Image, ImageDraw
    from io import BytesIO
    from urllib.request import urlopen
    import numpy as np

    response = urlopen(headshot_url)
    img = Image.open(BytesIO(response.read()))

    # Circular crop
    size = min(img.size)
    mask = Image.new('L', (size, size), 0)
    draw = ImageDraw.Draw(mask)
    draw.ellipse((0, 0, size, size), fill=255)

    left = (img.width - size) // 2
    top = (img.height - size) // 2
    img_cropped = img.crop((left, top, left + size, top + size))
    img_cropped = img_cropped.convert('RGBA')

    circ_headshot = Image.new('RGBA', (size, size), (0, 0, 0, 0))
    circ_headshot.paste(img_cropped, mask=mask)

    # Place at pizza center
    pizza_cx, pizza_cy = 0.5, 0.52
    img_frac = 0.165
    ax_img = ax.inset_axes(
        [pizza_cx - img_frac / 2, pizza_cy - img_frac / 2, img_frac, img_frac]
    )
    ax_img.imshow(np.array(circ_headshot))
    ax_img.axis("off")
    ax_img.set_zorder(5)

Data from nflfastR via nfl_data_py. Full script on my GitHub.